HAProxy is an open-source project [http://www.haproxy.org/] that lets you build a high-performance TCP/HTTP(S) load balancer without paying for dedicated hardware from vendors like F5, Cisco or Barracuda, or for the licences attached to it. HAProxy has a very large feature set and is a good fit for both low-load and high-load scenarios where you want or need high availability.

First things first – What is Load balancing?

Load balancing is a technique for distributing load between several application servers.

Spreading the application's load across multiple servers reduces reliance on any single server and improves performance. This brings multiple advantages, such as:

- Performance – Spread the load between servers

- Scaling horizontally and not vertically – Add more servers instead of bigger ones

- Reliability – By using two or more application servers

- Serviceability – You can take servers down for patching without downtime

How does HAProxy work?

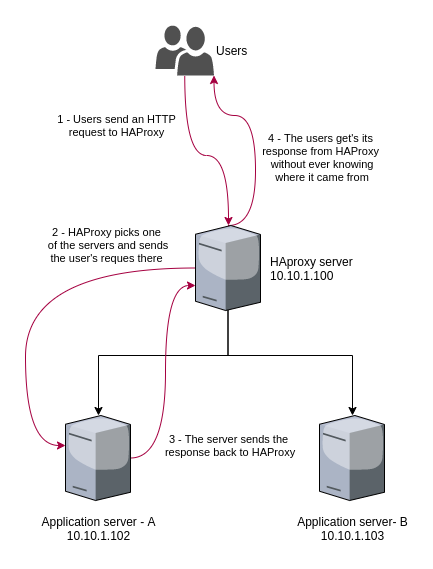

At a very high level, HAProxy acts as the entry point to your service [a service is anything you need to load balance, like your web application]. This single entry point is responsible for forwarding traffic to the server(s) you declared as serving it. This is much easier to understand with an example, so let’s jump right into it:

You have an HTTP application that returns the hostname of the server it is running on [a dumb application, but effective for showing load balancing]. Let’s see what this would look like:

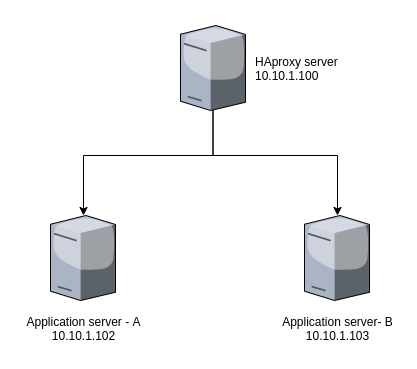

In this simple case [Image 1] we implement one HAProxy server as the entry point to our application servers. This means that if we want someone to reach our application, we need to give them the HAProxy server's IP address.

Let’s look at what this looks like in terms of HAProxy config:

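    # Settings inherited by every frontend and backend below
    defaults
        mode http

    # Entry point: listen on all IP addresses, port 80
    frontend main
        bind *:80
        default_backend app

    # Application servers, used in turn (round robin)
    backend app
        balance roundrobin
        server appA 10.10.1.102:5000 check
        server appB 10.10.1.103:5000 check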

What this means:

First, let’s define what a “frontend” and a “backend” are:

- A frontend is the HAProxy concept that represents an entry point into HAProxy. A frontend defines the IP addresses and ports on which HAProxy listens for client traffic to forward to your application.

- A backend is the set of servers that serve your application: Nginx, Apache, IIS, Tomcat and so on.

Our config file implements the scenario we described above:

- One entry point: a frontend, named main, that listens on all IP addresses, on port 80

- One set of servers that can handle that traffic: a backend, named app, made of a couple of servers [appA and appB] which listen on their respective IP addresses, on port 5000. We are also stating that we want HAProxy to check that each server is able to receive traffic: the ‘check’ entry
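To make the ‘check’ entry a bit more concrete, here is a minimal sketch of the same backend with the health check spelled out. On its own, `check` only verifies that a TCP connection to the server can be opened. The HTTP probe path `/` and the timing values below are assumptions chosen for illustration, not settings from the setup described here.

```haproxy
backend app
    balance roundrobin
    # Assumption: the application answers "GET /" with a 2xx or 3xx
    # status when it is healthy. Without this line, 'check' only
    # tests that a TCP connection can be established.
    option httpchk GET /
    # Illustrative timings: probe every 2 seconds, mark a server down
    # after 3 failed checks and up again after 2 successful ones.
    default-server inter 2s fall 3 rise 2
    server appA 10.10.1.102:5000 check
    server appB 10.10.1.103:5000 check
```

With something like this in place, HAProxy stops sending traffic to a server that fails its checks and brings it back once it recovers.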

This will give us a “cool” app we can query using our HAProxy entry point, getting a response from each of the servers:

    exoawk@pc-1: http 10.10.1.100
    HTTP/1.0 200 OK
    Connection: keep-alive
    Content-Length: 8
    Content-Type: text/html; charset=utf-8
    Date: Mon, 13 Apr 2020 00:42:14 GMT
    Server: Werkzeug/1.0.1 Python/2.7.5

    Server B

    exoawk@pc-1: http 10.10.1.100
    HTTP/1.0 200 OK
    Connection: keep-alive
    Content-Length: 8
    Content-Type: text/html; charset=utf-8
    Date: Mon, 13 Apr 2020 00:43:25 GMT
    Server: Werkzeug/1.0.1 Python/2.7.5

    Server A

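Here is what happened: both requests were sent to the same address, the HAProxy entry point at 10.10.1.100. With roundrobin balancing, HAProxy forwarded the first request to Server B and the second one to Server A.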

This is just a very simple example, but HAProxy is used in various, much more complex scenarios. Here are a few examples:

- You can set up HAProxy to redirect your visitors from HTTP to HTTPS. This has become more and more important as browsers now mark HTTP traffic as not secure

- HAProxy can balance requests across servers using other strategies, like hot/cold or least connections, to distribute the load in other ways

- TCP load balancing is also easy to achieve with HAProxy, so with the right settings it can sit in front of your email server or database servers, even those that require a master/slave config
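As a rough preview, the three scenarios above could be sketched in a single config along these lines. Everything not in the earlier example is an assumption made for illustration: the certificate path, the third application server appC, the database addresses and port, and the timeout values. The `backup` keyword is used here as one possible reading of a hot/cold or master/slave setup.

```haproxy
defaults
    mode http
    # Illustrative timeouts; tune them for your own application
    timeout connect 5s
    timeout client  30s
    timeout server  30s

frontend web
    bind *:80
    # Hypothetical certificate path (PEM file with certificate and key)
    bind *:443 ssl crt /etc/haproxy/certs/site.pem
    # Redirect to HTTPS any request that did not arrive over TLS
    http-request redirect scheme https unless { ssl_fc }
    default_backend app

backend app
    # Send each new request to the server with the fewest connections
    balance leastconn
    server appA 10.10.1.102:5000 check
    server appB 10.10.1.103:5000 check
    # Hypothetical standby server: a 'backup' server only receives
    # traffic when all non-backup servers are down
    server appC 10.10.1.104:5000 check backup

# TCP load balancing in front of a database (hypothetical addresses)
listen database
    mode tcp
    bind *:3306
    server db1 10.10.1.110:3306 check
    # The standby only takes connections if db1 fails its checks
    server db2 10.10.1.111:3306 check backup
```

This is a sketch, not a production config: real deployments usually also need logging, more careful timeouts and a health check that matches the protocol behind the TCP listener.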

We will come back to this in the future to show in more detail how HAProxy can implement these and other architectures. Stay tuned!